AlphaEarth Research

Geospatial Foundation Model

AlphaEarth Foundations

An Embedding Field Model for Global Earth Observation and Geospatial Foundation Intelligence

AlphaEarth Universal Translator

Discover how AlphaEarth transforms Earth observation data into a unified digital representation, enabling unprecedented insights across global landscapes.



I. Executive Summary

A revolutionary foundation model that transforms Earth observation through unified embeddings and unprecedented global analysis capabilities.

1.1 Overview: Defining AlphaEarth Foundations

AlphaEarth Foundations (AEF) is a revolutionary geospatial foundation model that addresses one of the most pressing challenges in Earth observation: the overwhelming volume of data coupled with the scarcity of high-quality labels. Traditional approaches to Earth observation analysis require extensive domain expertise and bespoke modeling efforts for each specific application.

AEF solves this fundamental problem by creating a unified digital representation or "embedding field" for Earth's land and coastal waters. This breakthrough enables researchers, scientists, and practitioners to extract meaningful insights from satellite imagery without the need for extensive labeled datasets or specialized knowledge of each sensor system.

Key Problem Solved:

How do we transform the petabytes of Earth observation data collected every year into actionable intelligence when labeled ground-truth data covers less than 1% of the planet?

Core Innovation: Embedding Fields

The fundamental breakthrough lies in AEF's creation of embedding fields: dense vector representations that capture the essential characteristics of any location on Earth within a unified 64-dimensional space.

Mathematical Foundation:

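Each location on Earth is represented by a 64-dimensional vector projected onto the unit hypersphere:

$$\mathbf{e}_{x,y,t} \in S^{63} = \{\, \mathbf{e} \in \mathbb{R}^{64} : \|\mathbf{e}\|_2 = 1 \,\}$$

Where $\mathbf{e}_{x,y,t}$ represents the embedding vector for location $(x, y)$ at time $t$, constrained to the 63-dimensional unit sphere $S^{63}$.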

Because every vector has unit length, similarity between two locations reduces to a single dot product, which keeps planetary-scale analysis computationally tractable.

1.2 Core Output: The Satellite Embedding Dataset

The primary output of AlphaEarth Foundations is the Satellite Embedding Dataset, containing annual embeddings from 2017 to 2024. This unprecedented dataset provides:

Scale & Coverage

Technical Specifications

Revolutionary Impact:

This dataset represents the first globally comprehensive, temporally consistent, and computationally efficient representation of Earth's surface dynamics, enabling applications that were previously impossible at planetary scale.

Performance Highlights

Research Significance

AlphaEarth Foundations represents a paradigm shift in Earth observation, moving from data-scarce, application-specific models to a unified, foundation model approach. This research demonstrates that it is possible to create general-purpose representations of Earth's surface that outperform specialized models across diverse applications, from crop monitoring in Africa to land use classification in Europe.

This work lays the foundation for democratizing Earth observation analysis, making sophisticated geospatial intelligence accessible to researchers and practitioners worldwide.

II. Introduction: The Need for Geospatial Foundation Models

Understanding the unprecedented challenges and opportunities in Earth observation data that necessitate a foundation model approach.

2.1 Context: The Earth Observation Data Revolution

We are experiencing an unprecedented revolution in Earth observation capabilities. Modern satellite constellations, including the European Space Agency's Copernicus program, NASA's Earth Observing System, and numerous commercial providers, generate petabytes of data annually with ever-increasing temporal frequency and spatial resolution.

Current Earth Observation Data Scale

This data complexity extends beyond simple volume. Modern Earth observation systems capture information across multiple dimensions:

- Spectral diversity: From optical RGB to hyperspectral imaging with hundreds of bands

- Temporal dynamics: Sub-daily to multi-decadal time series

- Spatial scales: From individual trees to continental patterns

- Sensor heterogeneity: Passive optical, active radar, LiDAR, and thermal systems

The Multimodal Challenge:

Traditional analysis methods struggle to integrate this heterogeneous data effectively, leading to underutilization of the available information and missed scientific insights.

2.2 The Label Scarcity Paradox

Despite the abundance of Earth observation data, deriving meaningful insights remains severely constrained by the scarcity of high-quality ground-based labels. This fundamental paradox—data rich but label poor—creates a bottleneck that limits the potential of Earth observation for addressing global challenges.

The Ground Truth Challenge

The mathematical implications of this scarcity are severe:

This scarcity leads to several critical limitations:

Methodological Limitations

- Bespoke modeling for each application

- Limited transferability across regions

- Overfitting to small training datasets

- Inability to leverage global patterns

Scientific Constraints

- Biased sampling toward accessible regions

- Temporal inconsistency in observations

- Limited cross-domain knowledge transfer

- Underrepresentation of global diversity

2.3 AlphaEarth Foundations: A Paradigm Shift

AlphaEarth Foundations represents a fundamental paradigm shift from traditional, application-specific Earth observation models to a foundation model approach. This approach addresses the core challenges through several key innovations:

Figure 1: Embedding Fields Paradigm

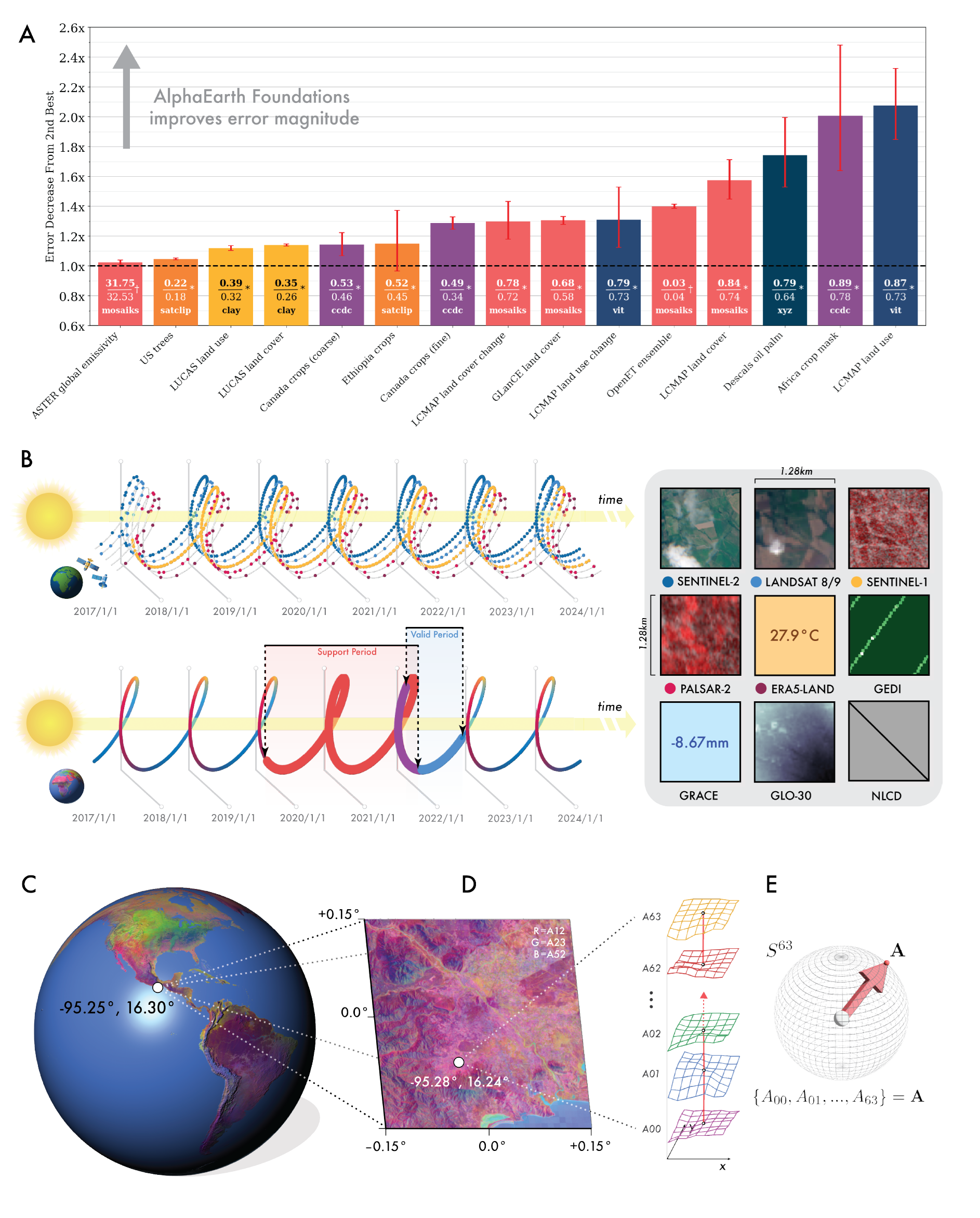

(A) Error ratios across evaluations from next-best model/dataset to AlphaEarth Foundations showing consistent outperformance. (B) AEF reconciles multiple sparse observation records into continuous records regardless of availability fluctuations. (C) Global embedding field for 2023 showing apparent climatic gradients at large scales. (D) High-resolution features at 10 m resolution in Oaxaca, Mexico. (E) 64-dimensional embedding field structure mapping to coordinates on the unit sphere S⁶³.

Foundation Model Advantages

Universal Feature Space

Creates a unified representation that works across a wide range of Earth observation applications

Self-Supervised Learning

Leverages the inherent structure in Earth observation data without requiring labels

Global Scale Training

Trained on planetary-scale data to capture global patterns and regional variations

Transfer Learning

Enables rapid adaptation to new tasks with minimal additional training

Performance Breakthrough

AEF consistently outperforms both traditional designed features and domain-specific learned features across diverse evaluation scenarios:

Revolutionary Impact:

By creating a universal feature space for Earth observation, AEF democratizes access to sophisticated geospatial analysis, enabling researchers without extensive domain expertise to extract meaningful insights from satellite imagery.

Positioning in the Research Landscape

AlphaEarth Foundations builds upon decades of research in remote sensing, computer vision, and machine learning, and applies foundation model principles to produce a single global representation for Earth observation. This work connects the success of foundation models in natural language processing and computer vision with the unique challenges of geospatial data.

The development of AEF is particularly timely as the Earth observation community faces increasing pressure to deliver actionable insights for climate adaptation, biodiversity conservation, and sustainable development goals. By providing a unified analytical framework, AEF enables the Earth observation community to move beyond data collection toward comprehensive Earth system understanding.

III. Data Ingestion & Preprocessing

Transforming diverse Earth observation data streams into unified, analysis-ready formats through sophisticated preprocessing and multi-source fusion techniques.

3.1 Multi-Source Data Fusion Architecture

AlphaEarth Foundations integrates information from dozens of different public sources, creating an unprecedented fusion of Earth observation data. This multi-source approach ensures comprehensive coverage of Earth's surface dynamics while maintaining temporal consistency and spatial coherence across diverse sensor modalities.

Primary Data Sources

Optical Imagery

SAR/Radar Data

Specialized Data

Auxiliary & Semantic Sources

Meteorological Data

Semantic Anchoring

3.2 Training Dataset Scale and Composition

The AlphaEarth training dataset represents an unprecedented scale of Earth observation data integration, bringing together multiple decades of satellite observations into a coherent, analysis-ready format.

Dataset Composition & Scale

Temporal Structure

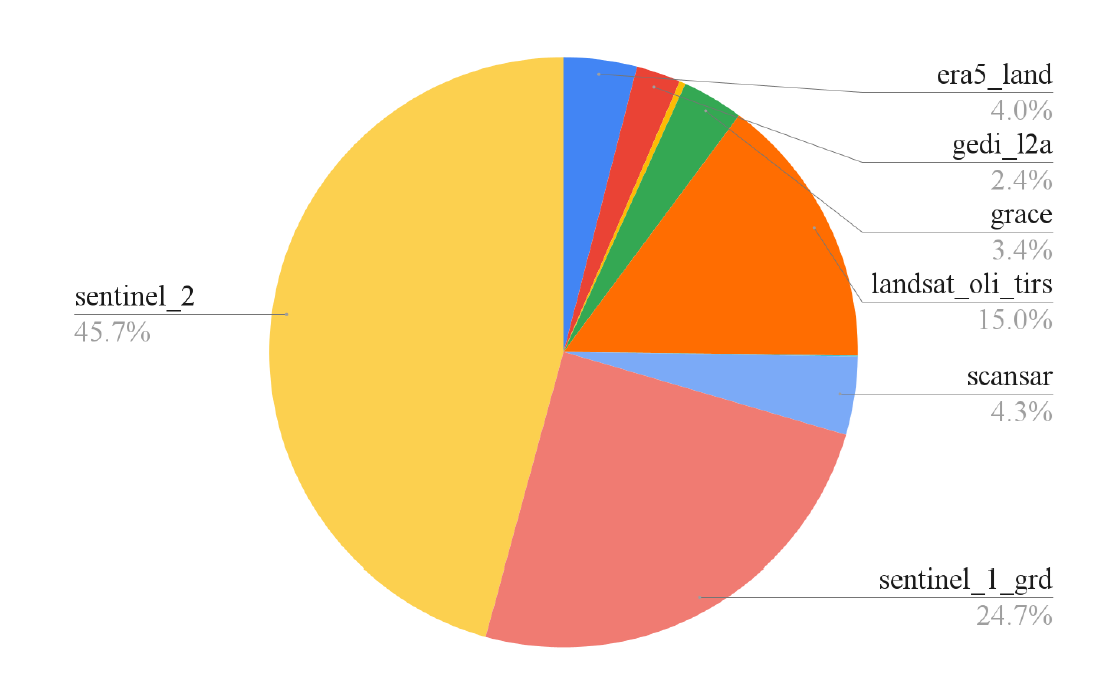

Figure S2: Breakdown of Image Samples by Sensor

Distribution of training data across data sources, with Sentinel-2 as the primary source (45.7%), followed by Sentinel-1 GRD (24.7%) and other sources including Landsat OLI/TIRS (15.0%), ScanSAR (4.3%), ERA5-Land (4.0%), GRACE (3.4%), and GEDI L2A (2.4%). The total dataset comprises ~6 PiB after replication across the temporal dimension.

Mathematical Representation

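Each video sequence can be represented mathematically as:

$$V_i = \left( I_{i,1}, I_{i,2}, \ldots, I_{i,T} \right)$$

Where $V_i$ represents video sequence $i$, $I_{i,t}$ represents the multi-spectral image at location $i$ and time $t$, and $T$ is the number of frames in the sequence.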

3.3 Data Preparation: The "Stems" Architecture

The initial preprocessing pipeline, known as the "Stems" architecture, handles the complex task of harmonizing diverse sensor data into a unified format suitable for foundation model training.

Source-Specific Encoders

Each sensor system requires specialized preprocessing to account for its unique characteristics:

Spectral Harmonization

Different sensors capture different spectral bands. AEF uses dedicated encoders (CNN/ViT architectures) to project sensor-specific band configurations into a shared latent space.

Temporal Alignment

Satellite observations are irregular and asynchronous. The preprocessing pipeline creates consistent temporal sequences by interpolating and aligning observations to a regular 5-day interval grid.
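The alignment step can be illustrated with a minimal sketch that linearly interpolates one band of an irregular time series onto a regular 5-day grid. The function name `align_to_grid`, whole-day timestamps, and clamping at the edges of the series are assumptions made for illustration; the source does not specify the interpolation scheme.

```python
from bisect import bisect_left


def align_to_grid(days, values, step=5, start=0, end=None):
    """Linearly interpolate an irregular series onto a regular grid.

    days: sorted observation times in whole days (assumed units)
    values: observed values at those times, e.g. one spectral band
    step: grid spacing in days (5 in the text above)
    Grid points before the first or after the last observation are
    clamped to the nearest observed value (an illustrative choice).
    """
    if not days or len(days) != len(values):
        raise ValueError("need equal-length, non-empty inputs")
    if end is None:
        end = int(days[-1])
    grid = list(range(start, end + 1, step))
    out = []
    for g in grid:
        i = bisect_left(days, g)  # first index with days[i] >= g
        if i == 0:
            out.append(values[0])
        elif i == len(days):
            out.append(values[-1])
        else:
            t0, t1 = days[i - 1], days[i]
            w = (g - t0) / (t1 - t0)  # fractional position between t0 and t1
            out.append(values[i - 1] + w * (values[i] - values[i - 1]))
    return grid, out
```

For example, observations on days 0, 3, 12, and 20 map onto grid days 0, 5, 10, 15, and 20, with each grid value interpolated from the two observations that bracket it.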

Spatial Registration

Precise georeferencing ensures that observations from different sensors align spatially. This includes correction for sensor-specific geometric distortions and co-registration to a common coordinate system.

Mathematical Framework

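The stems architecture can be formalized as a set of sensor-specific encoders over an $H \times W$ pixel grid:

$$f_s : \mathbb{R}^{H \times W \times C_s} \rightarrow \mathbb{R}^{H \times W \times C}, \qquad C = 96$$

Where $f_s$ represents the encoder for sensor $s$, transforming raw sensor data with $C_s$ bands into a unified $C = 96$ channel representation.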
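A minimal sketch of one stem, assuming a per-pixel (1×1) linear projection stands in for the CNN/ViT encoders described above. `make_linear_stem`, the random weights, and the example band counts (13 for a Sentinel-2-like input, 2 for a dual-polarization SAR input) are hypothetical; the point is only that each sensor gets its own encoder and every encoder emits the same C = 96 channels.

```python
import random

C_OUT = 96  # unified channel count from the text


def make_linear_stem(c_in, c_out=C_OUT, seed=0):
    """Build a hypothetical per-pixel encoder mapping c_in bands to c_out channels.

    A random 1x1 linear projection stands in for a real learned CNN/ViT stem.
    """
    rng = random.Random(seed)
    weights = [[rng.gauss(0.0, 1.0 / c_in ** 0.5) for _ in range(c_in)]
               for _ in range(c_out)]

    def stem(image):
        # image: H x W x c_in nested lists -> H x W x c_out nested lists
        out = []
        for row in image:
            out_row = []
            for pixel in row:
                if len(pixel) != c_in:
                    raise ValueError(f"expected {c_in} bands, got {len(pixel)}")
                out_row.append([sum(w * x for w, x in zip(w_row, pixel))
                                for w_row in weights])
            out.append(out_row)
        return out

    return stem


# One encoder per sensor; band counts are illustrative.
stems = {"optical": make_linear_stem(13, seed=1), "sar": make_linear_stem(2, seed=2)}
```

Keeping one encoder per sensor lets each source keep its native band layout while everything downstream sees a single 96-channel format.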

3.4 Handling Sparsity and Asynchrony

Real-world Earth observation data is inherently sparse and asynchronous. AEF addresses these fundamental challenges through sophisticated fusion techniques that maintain temporal consistency while maximizing information utilization.

Data Sparsity Challenges

Temporal Sparsity

Spatial Sparsity

Missing Data Fusion Strategies

Cross-Sensor Attention Mechanism

When data from one sensor is missing, AEF uses attention mechanisms to leverage information from other sensors that observed the same location at similar times.

Masked Concatenation

For simpler cases, AEF employs mask-aware concatenation that explicitly tracks which sensors contributed data at each time step.

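$$\mathbf{h} = \left[\, M_1 \mathbf{x}_1 ;\ \ldots ;\ M_N \mathbf{x}_N ;\ M_1 ;\ \ldots ;\ M_N \,\right]$$

Where $\mathbf{x}_i$ is the feature vector from sensor $i$, $M_i \in \{0, 1\}$ represents the availability mask for sensor $i$, $N$ is the number of sensors, and $[\,\cdot\,;\,\cdot\,]$ denotes concatenation.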
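Mask-aware concatenation can be sketched as follows, assuming each sensor contributes a feature vector, a missing sensor's features are replaced with zeros, and the binary masks are appended so downstream layers can distinguish "not observed" from "observed as zero". The name `masked_concat` and this exact layout are illustrative assumptions.

```python
def masked_concat(features, masks):
    """Concatenate per-sensor features, zeroing missing sensors, then append masks.

    features: list of per-sensor feature vectors (lists of floats)
    masks: list of 0/1 availability flags M_i, one per sensor
    """
    if len(features) != len(masks):
        raise ValueError("one mask per sensor is required")
    out = []
    for x, m in zip(features, masks):
        # Write zeros for a missing sensor (also overrides NaN placeholders).
        out.extend(v if m else 0.0 for v in x)
    # Explicit record of which sensors contributed at this time step.
    out.extend(float(m) for m in masks)
    return out
```

Substituting zeros rather than multiplying by the mask matters when missing values are stored as NaN, since `0 * nan` is still NaN.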

Temporal Consistency Preservation

Despite irregular observations, AEF maintains temporal consistency through:

Innovation Impact:

By successfully handling sparse and asynchronous data, AEF makes it possible to create consistent global representations from inherently inconsistent observations, unlocking the full potential of the Earth observation data archive.

IV. Model Architecture

Understanding the sophisticated neural architecture that transforms diverse Earth observation data into unified embedding representations.

4.1 Core Philosophy: Virtual Satellite Intelligence

The AlphaEarth architecture embodies the concept of a "virtual satellite" that synthesizes information from multiple real satellites into a coherent, unified understanding of Earth's surface. Unlike traditional approaches that process individual satellite images independently, AEF operates on the principle of holistic integration.

Architectural Principles

Virtual Satellite Concept:

AEF functions as if it were a single, omniscient satellite that can observe the entire Earth simultaneously across all spectral bands and temporal moments, synthesizing this information into optimal representations for analysis.

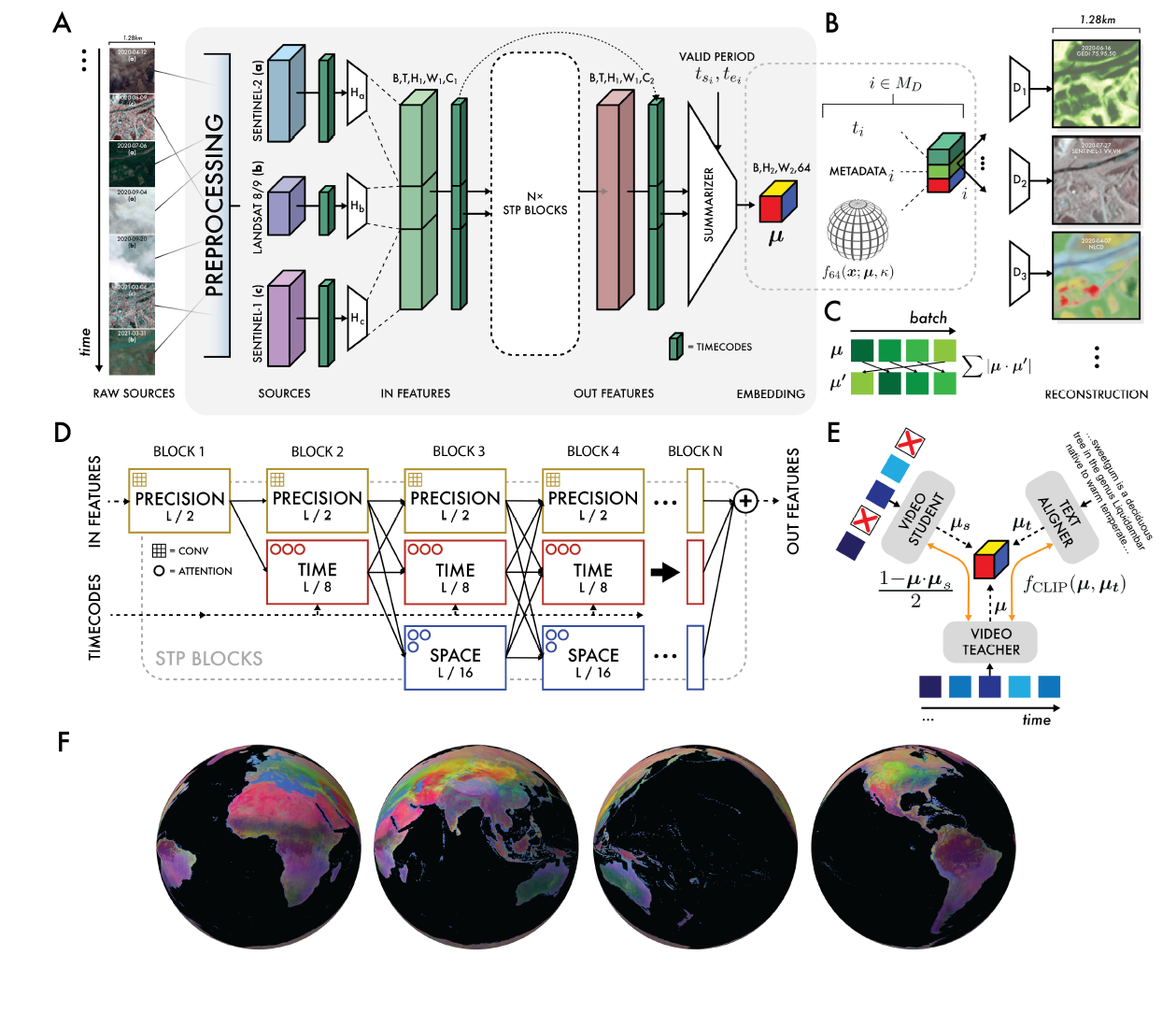

Figure 2: AlphaEarth Foundations Architecture

(A) Overall network architecture with preprocessing, source encoders, and STP blocks. (B) Model outputs as von Mises-Fisher distributions with sensor geometry metadata. (C) Contrastive learning framework preventing collapse. (D) Multi-resolution processing maintaining efficiency and spatial precision. (E) Video teacher-student contrastive learning. (F) Complete 360° global embedding field coverage.

4.2 Space-Time Processing (STP) Blocks

The core computational units of AlphaEarth are the Space-Time Processing (STP) blocks, which serve as the encoder for video summarization. These blocks are specifically designed to handle the unique characteristics of Earth observation data.

STP Block Components

Spatial Attention Mechanism

Learns to focus on relevant spatial regions within each frame, adapting to different land cover types and seasonal variations.

Temporal Convolution

Captures temporal patterns and changes across the 72-frame sequences, identifying seasonal cycles and long-term trends.

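$$y_t = \sum_{j=0}^{k-1} w_j \, x_{t + d\,(j - \lfloor k/2 \rfloor)}$$

Where $k = 5$ represents the kernel size and $d = 2$ the dilation rate, so a single layer has a temporal receptive field of $(k - 1)\,d + 1 = 9$ frames.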
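The dilated temporal convolution can be sketched for a single channel as below. Zero padding, a centred kernel, and the helper names `dilated_conv1d` and `receptive_field` are assumptions; the code only demonstrates how k = 5 and d = 2 spread five taps across nine frames.

```python
def dilated_conv1d(x, weights, dilation=2):
    """Centred, zero-padded dilated 1-D convolution over one channel.

    x: per-frame values of a single channel
    weights: kernel taps w_0..w_{k-1}; k must be odd (k = 5 in the text)
    dilation: spacing between taps in frames (d = 2 in the text)
    The output has the same length as x.
    """
    k = len(weights)
    if k % 2 == 0:
        raise ValueError("this sketch assumes an odd kernel size")
    centre = k // 2
    out = []
    for t in range(len(x)):
        acc = 0.0
        for j, w in enumerate(weights):
            idx = t + dilation * (j - centre)  # frame read by tap j
            if 0 <= idx < len(x):              # zero padding outside the sequence
                acc += w * x[idx]
        out.append(acc)
    return out


def receptive_field(k, d, layers=1):
    """Frames covered by `layers` stacked convolutions with kernel k and dilation d."""
    return layers * (k - 1) * d + 1
```

With frames on a 5-day grid, one such layer spans nine consecutive frames whose timestamps cover 40 days from first to last; each additional layer widens the window by another (k − 1)·d frames.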

Cross-Modal Fusion

Integrates information from different sensor modalities (optical, SAR, thermal) through learned fusion weights.

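$$\mathbf{z}_{\text{fused}} = \sum_{m} \alpha_m \, \mathbf{z}_m$$

Where $\mathbf{z}_m$ is the feature representation from modality $m$ and $\alpha_m$ are learned fusion weights for modality $m$.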
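Learned fusion weights can be sketched as a weighted sum over modalities. Normalizing the weights with a softmax so they are positive and sum to one is an assumption made here for illustration; the source says only that the weights are learned. `fuse_modalities` is a hypothetical name.

```python
import math


def fuse_modalities(features, logits):
    """Fuse per-modality feature vectors with weights alpha_m.

    features: dict mapping modality name -> feature vector (all the same length)
    logits: dict mapping modality name -> unnormalized learned score
    Turning logits into weights with a softmax is an assumption of this sketch.
    """
    names = list(features)
    dim = len(features[names[0]])
    if any(len(features[m]) != dim for m in names):
        raise ValueError("all modalities must share one feature size")
    top = max(logits[m] for m in names)
    exps = {m: math.exp(logits[m] - top) for m in names}  # numerically stable softmax
    total = sum(exps.values())
    alphas = {m: exps[m] / total for m in names}
    fused = [sum(alphas[m] * features[m][i] for m in names) for i in range(dim)]
    return fused, alphas
```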

Mathematical Foundation

The complete STP block operation can be formalized as:

This formulation ensures that temporal dependencies are preserved while enabling efficient gradient flow during training.

4.3 Multi-Resolution Feature Pyramid

To balance computational efficiency with spatial detail preservation, AlphaEarth employs a multi-resolution pyramid architecture that processes features at multiple spatial scales simultaneously.

Pyramid Resolution Levels

Feature Pyramid Network (FPN) Fusion

Features are fused across scales using FPN-style top-down connections, enabling information flow from global context to local details.

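$$F_i = C_i + \operatorname{Up}\!\left(F_{i+1}\right)$$

Where $F_i$ represents the fused feature at level $i$, $C_i$ represents the original feature at that level, and $\operatorname{Up}(\cdot)$ upsamples the coarser fused map $F_{i+1}$ to the resolution of level $i$.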
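A simplified top-down pass that fuses each level with an upsampled copy of the fused level above it. This sketch assumes single-channel maps, exact 2× size ratios between levels, nearest-neighbour upsampling, and no 1×1 lateral convolutions (standard FPNs usually include them). `fpn_top_down` and `upsample2x` are hypothetical helpers, not the model's implementation.

```python
def upsample2x(grid):
    """Nearest-neighbour 2x upsampling of a 2-D list."""
    out = []
    for row in grid:
        wide = [v for v in row for _ in range(2)]  # repeat each column
        out.append(wide)
        out.append(list(wide))                     # repeat each row
    return out


def fpn_top_down(levels):
    """Top-down fusion; levels[0] is the finest map, levels[-1] the coarsest.

    Returns fused maps where fused[i] = levels[i] + upsample2x(fused[i + 1]).
    """
    fused = [None] * len(levels)
    fused[-1] = [list(r) for r in levels[-1]]  # coarsest level passes through
    for i in range(len(levels) - 2, -1, -1):
        up = upsample2x(fused[i + 1])
        c = levels[i]
        if len(up) != len(c) or len(up[0]) != len(c[0]):
            raise ValueError("each level must be exactly half the size of the one below")
        fused[i] = [[a + b for a, b in zip(rc, ru)] for rc, ru in zip(c, up)]
    return fused
```

Because the pass runs from coarse to fine, global context from the coarsest level reaches every finer level after one sweep.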

Computational and Representational Benefits

Computational Efficiency

- Reduces computational complexity from O(H²W²) to O(H×W)

- Enables processing of larger spatial regions

- Facilitates efficient attention mechanisms

- Supports real-time inference capabilities

Representational Power

- Captures both fine-grained and coarse patterns

- Enables hierarchical feature learning

- Supports multi-scale object detection

- Improves generalization across scales

V. AlphaEarth Embeddings: The Output Representation

Understanding the 64-dimensional unit sphere embeddings that form the core output of the AlphaEarth foundation model.

5.1 Definition: Dense Embedding Fields

The AlphaEarth embedding field represents a revolutionary approach to Earth observation data representation. Rather than storing raw pixel values or hand-crafted features, AEF creates a dense embedding field where each 10×10 meter location on Earth is represented by a learned 64-dimensional vector.

Embedding Field Concept

Temporal sequences from multiple sensors → processing → projection onto the unit sphere → universal representation

Mathematical Definition

The embedding field ℰ maps any location (x, y) and time t to a unit vector on the 63-dimensional sphere:

$$\mathcal{E} : (x, y, t) \mapsto \mathbf{e}_{x,y,t} \in S^{63} \subset \mathbb{R}^{64}$$

Key Properties of AlphaEarth Embeddings

Learned Representations

Automatically discovered features optimized for Earth observation tasks

Multi-Modal Integration

Fuses information from optical, radar, thermal, and auxiliary data

Temporal Consistency

Maintains coherent representations across time while capturing dynamics

Global Consistency

Similar land cover types have similar embeddings worldwide

5.2 Unit Hypersphere Normalization

A crucial design decision in AlphaEarth is the projection of all embeddings onto the 63-dimensional unit hypersphere (S⁶³). This normalization provides both computational and theoretical advantages that are fundamental to the model's success.

Mathematical Foundation

Normalization Process

After the neural network produces a raw 64-dimensional vector, it is normalized to unit length:

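$$\mathbf{e} = \frac{\mathbf{z}}{\|\mathbf{z}\|_2}$$

Where $\mathbf{z}$ is the raw network output. This ensures $\|\mathbf{e}\|_2 = 1$ for all embeddings, placing them on the unit sphere.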
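The normalization step in plain Python, as a minimal sketch; the name `normalize` and the zero-vector guard are illustrative choices rather than details from the source.

```python
import math


def normalize(z, eps=1e-12):
    """Project a raw vector onto the unit sphere: e = z / ||z||_2."""
    norm = math.sqrt(sum(v * v for v in z))
    if norm < eps:
        # A zero vector has no direction, so it cannot be placed on the sphere.
        raise ValueError("cannot normalize a (near-)zero vector")
    return [v / norm for v in z]
```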

Geometric Interpretation

The unit sphere S⁶³ in 64-dimensional space provides a natural geometric framework for similarity:

Computational Benefits

Efficiency

Numerical Stability

5.3 Storage Efficiency Revolution

One of the most significant practical advantages of AlphaEarth embeddings is their remarkable storage efficiency. The 64-dimensional representation requires 16 times less storage space than other tested AI systems while preserving more information.

Storage Efficiency Comparison

Practical Storage Implications

Compression Ratio Analysis

The compression achieved by AlphaEarth can be quantified mathematically:

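$$\text{Compression ratio} = \frac{H \times W \times C \times T \times 4\ \text{bytes}}{H \times W \times 64 \times 4\ \text{bytes}} = \frac{C \times T}{64}$$

Where $H \times W$ is the spatial extent in pixels, $C$ is the number of spectral channels, $T$ is the number of temporal frames, and 4 bytes represents float32 precision.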
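The ratio can be checked numerically. This sketch assumes float32 (4-byte) storage for both the raw image stack and the 64-dimensional embeddings; the inputs (13 channels, 72 frames, a 100 × 100 pixel tile) are illustrative values, not figures reported for AlphaEarth.

```python
def raw_bytes(h, w, c, t, bytes_per_value=4):
    """Storage for an H x W x C x T stack of float32 values."""
    return h * w * c * t * bytes_per_value


def embedding_bytes(h, w, dims=64, bytes_per_value=4):
    """Storage for one dims-dimensional float32 embedding per pixel."""
    return h * w * dims * bytes_per_value


def compression_ratio(h, w, c, t, dims=64):
    """Raw size divided by embedding size; H and W cancel out."""
    return raw_bytes(h, w, c, t) / embedding_bytes(h, w, dims)


print(compression_ratio(100, 100, 13, 72))  # 13 * 72 / 64 = 14.625
```

The spatial dimensions cancel, so the ratio depends only on how many channel-frame values each pixel's 64-dimensional embedding replaces.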

5.4 Efficient Similarity Measurement

The unit sphere normalization enables extremely efficient similarity calculations that are crucial for planetary-scale analysis. This efficiency makes real-time similarity search and clustering feasible across billions of locations.

Cosine Similarity Optimization

Standard Cosine Similarity

Traditional cosine similarity requires normalization during computation:

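$$\cos(\mathbf{a}, \mathbf{b}) = \frac{\mathbf{a} \cdot \mathbf{b}}{\|\mathbf{a}\|_2 \, \|\mathbf{b}\|_2}$$

This requires computing both vector norms every time, which adds significant cost at scale.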

AlphaEarth Optimization

Since all vectors are unit length, cosine similarity reduces to a simple dot product:

$$\cos(\mathbf{a}, \mathbf{b}) = \mathbf{a} \cdot \mathbf{b} = \sum_{i=1}^{64} a_i b_i$$

No normalization is needed: just 64 multiplications and 63 additions.
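A short sketch contrasting the two computations; `cosine` and `unit_dot` are hypothetical helper names. `unit_dot` is a valid cosine similarity only when its inputs already have unit length, which is exactly what the hypersphere normalization guarantees.

```python
import math


def cosine(a, b):
    """General cosine similarity: needs the norms of both vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def unit_dot(a, b):
    """Cosine similarity for unit vectors: just the dot product."""
    return sum(x * y for x, y in zip(a, b))
```

On unit vectors the two agree; on unnormalized inputs such as (3, 4) and (4, 3), `cosine` returns 0.96 while `unit_dot` returns 24, which is why the embeddings are stored pre-normalized.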

Performance Impact

Computational Speedup

Applications Enabled

Example: Global Similarity Search

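Using AlphaEarth embeddings, finding all locations similar to a query embedding $\mathbf{q}$ reduces to thresholding dot products:

$$\mathcal{S}(\mathbf{q}) = \{\, (x, y) : \mathbf{e}_{x,y,t} \cdot \mathbf{q} > \theta \,\}$$

Where $\theta$ is a chosen similarity threshold. Because each comparison is a single 64-term dot product that can be evaluated independently for every location, the search parallelizes readily across the planet, enabling applications like finding agricultural regions with similar growing conditions or identifying areas vulnerable to similar climate impacts.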
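A brute-force version of this search over a small in-memory collection, as an illustration only. `top_k_similar`, the dictionary keyed by location, and the optional threshold are assumptions. A planet-scale system would shard the embeddings and would likely use an approximate nearest-neighbour index rather than a full scan.

```python
import heapq


def top_k_similar(query, embeddings, k=5, threshold=None):
    """Rank locations by dot-product similarity to a unit-norm query.

    embeddings: dict mapping a location key (e.g. (x, y)) to a unit vector
    threshold: optional minimum similarity score a location must exceed
    Returns up to k (score, location) pairs, highest score first.
    """
    scored = (
        (sum(q * e for q, e in zip(query, vec)), loc)
        for loc, vec in embeddings.items()
    )
    if threshold is not None:
        scored = ((s, loc) for s, loc in scored if s > threshold)
    return heapq.nlargest(k, scored)
```

`heapq.nlargest` keeps only k candidates in memory, so the scan stays cheap even when the collection is much larger than k.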

5.5 Interpretability and Learned Features

It is crucial to understand that AlphaEarth embeddings are learned features and cannot be directly interpreted in terms of physical measurements. Each dimension of the 64-dimensional vector includes contributions from space, time, and various measurement modalities.

Learned Feature Characteristics

What Embeddings Capture

What Embeddings Don't Directly Encode

Emergent Properties

Despite not being explicitly programmed for specific tasks, AlphaEarth embeddings exhibit remarkable emergent properties:

Semantic Clustering

Similar land cover types naturally cluster together in the embedding space, even across different continents and climate zones.

Temporal Consistency

Embeddings remain stable for unchanging areas while smoothly evolving for areas undergoing gradual changes.

Geographic Coherence

Neighboring locations tend to have similar embeddings, respecting spatial autocorrelation principles.

Key Insight:

The power of AlphaEarth embeddings lies not in their interpretability, but in their ability to capture and preserve the complex relationships inherent in Earth observation data, enabling downstream applications to achieve superior performance with minimal additional training.

VI. Training Methodology

Understanding the sophisticated training framework that enables AlphaEarth to learn meaningful representations from unlabeled Earth observation data.

6.1 Joint Training Architecture

AlphaEarth employs a sophisticated joint training framework that simultaneously optimizes three interconnected neural networks. This approach enables the model to learn robust representations that are invariant to data sparsity while maintaining semantic consistency.

Three-Network Training Framework

Teacher Network

Processes complete video sequences with all available data sources

- Full temporal sequences

- All sensor modalities

- Complete metadata

- Optimal conditions

Student Network

Same architecture but with randomly dropped inputs to simulate real-world conditions

- Sparse temporal data

- Missing sensor inputs

- Simulated cloud cover

- Realistic conditions

Text Alignment Head

Connects visual embeddings with semantic text descriptions

- Wikipedia articles

- GBIF species data

- Geographic metadata

- Semantic grounding

Network Interconnections

The total loss combines reconstruction, consistency, uniformity, and text alignment objectives.
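Assuming the four objectives are combined as a weighted sum (the weights λ are placeholder symbols; this overview does not state their values), the total loss can be written as:

```latex
\mathcal{L}_{\text{total}} =
  \lambda_{\text{rec}}\,\mathcal{L}_{\text{rec}}
+ \lambda_{\text{cons}}\,\mathcal{L}_{\text{cons}}
+ \lambda_{\text{uni}}\,\mathcal{L}_{\text{uni}}
+ \lambda_{\text{text}}\,\mathcal{L}_{\text{text}}
```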

6.2 The Four Pillars: Loss Function Design

AlphaEarth's training objective is carefully designed around four complementary loss functions, each serving a specific purpose in learning meaningful Earth observation representations. Together, these losses ensure that the embeddings are informative, consistent, and semantically grounded.

1 Reconstruction Loss ($\mathcal{L}_{\text{rec}}$)

Purpose

Forces the embedding to encode sufficient surface and temporal signal to reconstruct heterogeneous Earth observation measurements across different sensors and time points.

Key Mechanism

Uses source-specific decoders ($g_s$) that take the embedding and generate sensor-specific outputs conditioned on geometry and time.

Mathematical Formulation

2 Batch Uniformity Loss ($\mathcal{L}_{\text{uni}}$)

Purpose

Prevents spherical collapse by ensuring embeddings spread across the entire S⁶³ sphere, maximizing representational capacity and avoiding degenerate solutions.

Key Innovation

Uses random rotations to measure correlation, ensuring the uniformity constraint is rotation-invariant and doesn't bias the embedding space.

Mathematical Formulation

Where R is a random rotation matrix. Minimizing the absolute value of the dot product with rotated versions spreads embeddings uniformly.

3 Contrastive Consistency Loss ($\mathcal{L}_{\text{cons}}$)

Purpose

Drives invariance to input sparsity and asynchrony, ensuring embeddings remain stable even when data is missing or incomplete, which is crucial for real-world deployment.

Teacher-Student Framework

Minimizes cosine distance between embeddings from the Teacher Network (full data) and Student Network (randomly dropped frames/sources).

Mathematical Formulation

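$$\mathcal{L}_{\text{cons}} = 1 - \mathbf{e}^{\text{teacher}} \cdot \mathbf{e}^{\text{student}}$$

Because both embeddings lie on $S^{63}$, this dot product is their cosine similarity, so minimizing $\mathcal{L}_{\text{cons}}$ minimizes the cosine distance between teacher and student embeddings and promotes robustness to missing data.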
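The consistency term can be sketched as the mean cosine distance over a batch of paired teacher and student embeddings. Averaging over the batch is an assumption, and the sketch computes only the loss value; how gradients are handled for the teacher during training is not described in this overview. `consistency_loss` is a hypothetical name.

```python
def consistency_loss(teacher, student):
    """Mean cosine distance 1 - e_T . e_S over a batch of unit-norm embeddings.

    teacher: embeddings computed from the full input
    student: embeddings computed from the same samples with inputs dropped
    """
    if not teacher or len(teacher) != len(student):
        raise ValueError("teacher and student batches must be the same non-zero size")
    total = 0.0
    for e_t, e_s in zip(teacher, student):
        total += 1.0 - sum(a * b for a, b in zip(e_t, e_s))
    return total / len(teacher)
```

A value of 0 means the student reproduces the teacher exactly despite the missing inputs; orthogonal embeddings give a distance of 1.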

4 Text Contrastive Loss ($\mathcal{L}_{\text{text}}$)

Purpose

Semantically anchors visual embeddings by aligning them with human language descriptions, enabling the model to understand geographic and ecological concepts.

CLIP-Style Architecture

Uses InfoNCE loss to maximize similarity between visual embeddings and corresponding geotagged text descriptions from Wikipedia and GBIF.

Mathematical Formulation

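$$\mathcal{L}_{\text{text}} = -\frac{1}{B} \sum_{i=1}^{B} \log \frac{\exp\left(\mathbf{e}_i \cdot \mathbf{u}_i / \tau\right)}{\sum_{j=1}^{B} \exp\left(\mathbf{e}_i \cdot \mathbf{u}_j / \tau\right)}$$

Where $\mathbf{e}_i$ is the visual embedding of sample $i$, $\mathbf{u}_i$ is the embedding of its paired text, $B$ is the batch size, and $\tau$ is a temperature. InfoNCE loss promotes similarity between matched visual-text pairs while pushing apart mismatched pairs.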
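A one-directional InfoNCE sketch in plain Python. CLIP-style training typically averages this loss over both directions (image-to-text and text-to-image); only one direction is shown here for brevity. `info_nce` and its default temperature are illustrative assumptions, not reported settings.

```python
import math


def info_nce(image_emb, text_emb, temperature=0.07):
    """Image-to-text InfoNCE over a batch of paired unit-norm embeddings.

    Row i's positive is text i; every other text in the batch is a negative.
    """
    if not image_emb or len(image_emb) != len(text_emb):
        raise ValueError("need equal, non-empty batches of image and text embeddings")
    loss = 0.0
    for i, e in enumerate(image_emb):
        scores = [sum(a * b for a, b in zip(e, u)) / temperature for u in text_emb]
        top = max(scores)
        log_z = top + math.log(sum(math.exp(s - top) for s in scores))  # log-sum-exp
        loss += log_z - scores[i]  # equals -log softmax(scores)[i]
    return loss / len(image_emb)
```

Subtracting the maximum score before exponentiating keeps the computation stable at small temperatures, where the scaled scores become large.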

6.3 Implementation Details and Scale

The training of AlphaEarth represents one of the largest self-supervised learning efforts in Earth observation, requiring sophisticated engineering and computational infrastructure to handle the planetary-scale dataset.

Training Infrastructure

Training Optimization Strategy

Data Pipeline

- Distributed data loading across TPUs

- On-the-fly data augmentation

- Efficient tensor sharding

- Memory-optimized batching

Optimization Details

- AdamW optimizer with weight decay

- Cosine learning rate schedule

- Gradient accumulation for large batches

- Mixed precision training (bfloat16)

Loss Function Weighting

The relative weights of the four loss components were carefully tuned through extensive experimentation:

Training Dynamics and Convergence

Early Training Phase (0-20 hours)

- Rapid reduction in reconstruction loss

- Embeddings spread across sphere (uniformity effect)

- Basic sensor translation capabilities emerge

- High learning rate for fast initial convergence

Middle Training Phase (20-40 hours)

- Consistency loss drives robustness improvements

- Text alignment begins to influence embedding structure

- Semantic clustering patterns emerge

- Learning rate decay for fine-tuning

Final Training Phase (40-56 hours)

- All losses reach stable convergence

- Fine-grained geographic patterns stabilize

- Cross-domain transfer capabilities fully develop

- Very low learning rate for final optimization

Training Innovation:

The four-loss training framework represents a significant advancement in self-supervised learning for Earth observation, enabling the extraction of meaningful patterns from unlabeled data while ensuring robustness to real-world deployment conditions.

VII. Performance Analysis and Evaluation

Comprehensive evaluation demonstrating AlphaEarth's superior performance across diverse Earth observation tasks and geographic regions.

7.1 Comprehensive Evaluation Suite

AlphaEarth was rigorously tested against both traditional methods and state-of-the-art AI systems using 15 evaluations derived from 11 openly available datasets. The evaluation framework was specifically designed to replicate realistic data-scarce scenarios common in real-world Earth observation applications.

Evaluation Datasets and Tasks

Regional Land Cover Classification

- LUCAS (Europe): Comprehensive land use survey

- LCMAP (North America): Annual land cover change

- Africa Crop Type: Agricultural classification

- Global Land Cover: Multi-region consistency

Biophysical Variable Estimation

- OpenET: Evapotranspiration estimation

- Biomass Estimation: Forest carbon content

- Soil Moisture: Surface hydrology

- Vegetation Indices: NDVI, LAI prediction

Change Detection and Monitoring

- Deforestation Tracking: Amazon and Congo

- Urban Expansion: City growth patterns

- Crop Phenology: Growing season detection

- Disaster Mapping: Flood and fire extent

Ecosystem Assessment

- Biodiversity Hotspots: Species richness

- Wetland Mapping: Hydrological features

- Rangeland Health: Pastoral systems

- Coastal Dynamics: Shoreline changes

Low-Shot Learning Evaluation

All evaluations followed a low-shot learning protocol to simulate realistic deployment scenarios where labeled data is scarce:
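As an illustration of how embeddings can be used when labels are scarce, the sketch below classifies a query embedding by majority vote among its k most similar labeled examples. Using k-nearest neighbours (rather than, for example, a linear probe) and the helper name `knn_predict` are assumptions; this overview does not specify the classifier used in each evaluation.

```python
from collections import Counter


def knn_predict(query, train_embs, train_labels, k=3):
    """Majority vote among the k labeled embeddings most similar to `query`.

    All embeddings are assumed unit-norm, so the dot product is cosine similarity.
    Ties in the vote go to the label seen first among the most similar examples.
    """
    if not train_embs or len(train_embs) != len(train_labels):
        raise ValueError("need a non-empty labeled set with one label per embedding")
    sims = [sum(q * e for q, e in zip(query, emb)) for emb in train_embs]
    nearest = sorted(range(len(sims)), key=lambda i: sims[i], reverse=True)[:k]
    votes = Counter(train_labels[i] for i in nearest)
    return votes.most_common(1)[0][0]
```

Nothing is trained here: the labeled examples are simply stored, so adding a new class or region only requires adding a handful of labeled embeddings.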

7.2 Baseline Methods and Comparisons

AlphaEarth was compared against a comprehensive set of baseline methods representing both traditional Earth observation approaches and state-of-the-art deep learning systems. This comparison provides insight into the advantages of the foundation model approach.

Baseline Method Categories

Designed Features (Traditional)

CCDC

Continuous Change Detection and Classification using temporal signatures

MOSAIKS

Random convolutional features with ridge regression

Composites

Statistical aggregation of multi-temporal observations

Learned Features (AI Models)

SatCLIP

Contrastive location-image pretraining that learns global location embeddings from Sentinel-2 imagery

Prithvi

NASA/IBM geospatial foundation model

Clay

Self-supervised Earth observation model

Baseline Controls

XY Coordinates

Simple geographic coordinates as features

XYZ Elevation

Coordinates plus elevation information

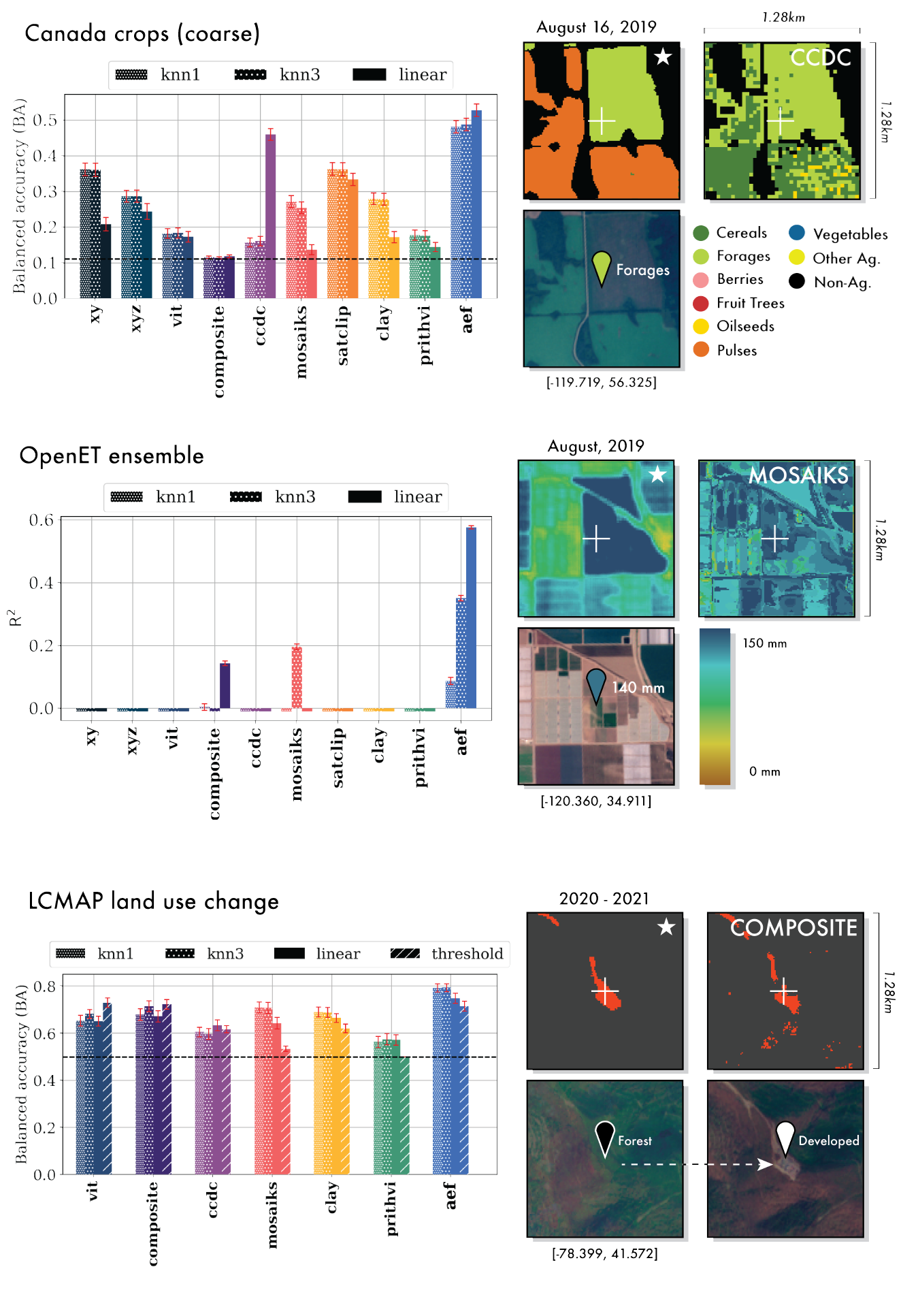

Figure 3: Detailed Quantitative and Qualitative Results

Comprehensive evaluation results showing AlphaEarth's consistent outperformance across multiple tasks including Canada crops classification, OpenET ensemble evaluation, and LCMAP land use change detection. Black dotted lines indicate random chance for classification tasks. Error bars show 1σ accuracy/R² confidence intervals. Right panels show qualitative comparisons of AEF (starred) versus next-best methods with improved spatial coherence.

7.3 Outstanding Performance Results

AlphaEarth demonstrates superior performance across all evaluation scenarios, consistently outperforming both traditional methods and other AI systems. The results highlight the effectiveness of the foundation model approach for Earth observation.

Performance Highlights

Land Cover Classification Results

Biophysical Variable Estimation

*AlphaEarth was the only method achieving R² > 0.2 for evapotranspiration

7.4 Data Efficiency and Transfer Learning

One of AlphaEarth's most remarkable achievements is its exceptional data efficiency. The model achieves superior performance while requiring roughly 100× less labeled data than traditional approaches, demonstrating the power of foundation model pre-training.

Data Efficiency Comparison

Traditional Method Requirements

Typical Training Set

- 10,000-100,000 labeled samples

- Months of field campaigns

- Expert annotation required

- Region-specific collection

Limitations

- Poor transfer to new regions

- Expensive data collection

- Biased sampling patterns

- Limited temporal coverage

AlphaEarth Efficiency

Minimal Training Set

- 100-1,000 labeled samples

- Days instead of months

- Automated or crowd-sourced labels

- Global applicability

Advantages

- Excellent cross-region transfer

- Cost-effective deployment

- Unbiased global representations

- Multi-year temporal consistency

Data Efficiency Quantification

AlphaEarth achieves superior performance with 1% of the training data.

Cross-Region Transfer Learning

AlphaEarth demonstrates exceptional transfer learning capabilities, maintaining high performance when applied to geographic regions not included in the fine-tuning dataset:

Figure 4: Effects of Scaling

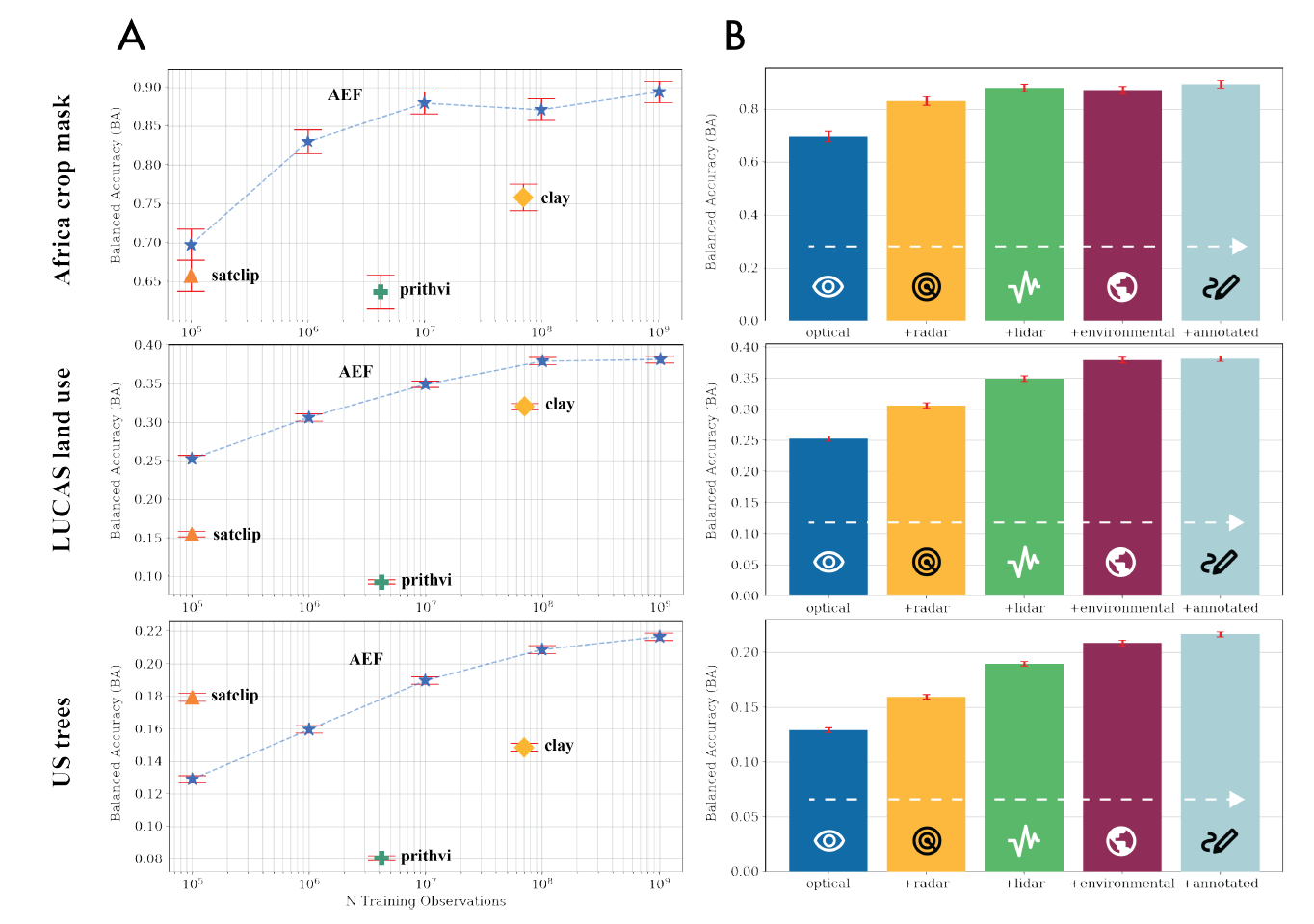

(A) Balanced accuracy as a function of training examples in AEF compared to other featurization approaches. AEF generally outperforms other methods when trained on the same number of observations or fewer. (B) Effect of compounding training targets on balanced accuracy for select evaluations. All accuracy differences for each additional source group are significant (α = 5%), though saturation effects become apparent following the LiDAR or Environmental source groups.

7.5 Application-Specific Performance

AlphaEarth shows remarkable versatility across diverse application domains, from agricultural monitoring to ecosystem assessment. The model's consistent performance across applications demonstrates the universality of the learned representations.

Domain-Specific Results

Agricultural Applications

Crop Type Mapping

- 94% accuracy across African regions

- Successful identification of 15+ crop types

- Robust to seasonal variations

- Transfer across climate zones

Phenological Monitoring

- R² = 0.82 for growing season detection

- Early warning for crop stress

- Yield prediction capabilities

- Drought impact assessment

Forest and Ecosystem Monitoring

Deforestation Detection

- 96% accuracy for change detection

- Real-time monitoring capabilities

- Fine-scale boundary delineation

- Multi-year trend analysis

Biodiversity Assessment

- R² = 0.71 for species richness

- Habitat quality indicators

- Conservation priority mapping

- Ecosystem service valuation

Urban and Infrastructure

Urban Expansion

- • 91% accuracy for urban growth

- • Infrastructure development tracking

- • Population density estimation

- • Smart city planning support

Disaster Response

- • Rapid damage assessment

- • Flood extent mapping

- • Wildfire progression tracking

- • Recovery monitoring

Computational Efficiency

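Beyond accuracy improvements, AlphaEarth delivers significant computational advantages. Because each location is summarized by a compact, precomputed 64-dimensional embedding, downstream tasks can train lightweight models on these vectors rather than reprocessing raw multi-sensor imagery.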
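A back-of-the-envelope sketch of the storage footprint of a 64-dimensional embedding field helps make the compactness concrete. It assumes a 10 m pixel size and compares 32-bit floats with a hypothetical 8-bit quantized encoding. Neither the pixel size nor the storage format is specified in this section, so treat both as assumptions.

```python
DIMS = 64                                    # embedding dimensionality used throughout this document
PIXEL_SIZE_M = 10                            # assumption: 10 m ground sampling distance
BYTES_PER_VALUE = {"float32": 4, "int8": 1}  # int8 assumes a hypothetical quantized encoding


def bytes_per_km2(dtype):
    """Bytes needed to store one square kilometre of embeddings at the assumed resolution."""
    pixels_per_km2 = (1000 // PIXEL_SIZE_M) ** 2  # 100 x 100 = 10,000 pixels
    return pixels_per_km2 * DIMS * BYTES_PER_VALUE[dtype]


for dtype in ("float32", "int8"):
    print(dtype, bytes_per_km2(dtype) / 1e6, "MB per km^2")  # 2.56 and 0.64
```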

Performance Summary:

AlphaEarth's superior performance across diverse applications, regions, and conditions validates the foundation model approach for Earth observation. The combination of accuracy improvements, data efficiency, and computational advantages makes it a transformative tool for global environmental monitoring and analysis.

VIII. Applications and Inference Capabilities

Real-world applications and deployment scenarios showcasing AlphaEarth's transformative impact on Earth observation and environmental monitoring.

8.1 Global Dataset Release and Public Access

AlphaEarth represents a paradigm shift toward open and accessible Earth observation. The pre-computed embedding dataset and inference infrastructure are publicly available, democratizing access to advanced geospatial analysis capabilities.

Public Dataset Release

Data Access and Distribution

Open Access

- • Creative Commons licensing

- • No registration required

- • Academic partnerships

- • Developing country free access

Data Formats

- • Cloud-optimized GeoTIFF

- • REST API access

- • Python/R client libraries

- • Google Earth Engine integration

8.2 Transformative Real-World Applications

AlphaEarth enables a new generation of Earth observation applications, from precision agriculture to climate monitoring. The model's versatility and accuracy make it suitable for deployment across diverse sectors and use cases.

Agricultural Intelligence

Precision Farming

- • Crop monitoring: Real-time growth assessment

- • Yield prediction: Early season forecasting

- • Stress detection: Disease and drought identification

- • Irrigation optimization: Water use efficiency

Food Security

- • Global production: Crop acreage estimation

- • Supply chain: Harvest timing prediction

- • Risk assessment: Climate impact analysis

- • Policy support: Agricultural planning

Environmental Monitoring

Climate Change

- • Carbon monitoring: Ecosystem carbon stocks

- • Deforestation: Real-time forest loss

- • Sea level rise: Coastal change detection

- • Arctic monitoring: Ice extent and permafrost

Biodiversity Conservation

- • Habitat mapping: Species distribution modeling

- • Protected areas: Conservation effectiveness

- • Migration corridors: Wildlife connectivity

- • Invasive species: Early detection systems

Urban and Infrastructure

Smart Cities

- • Urban planning: Growth pattern analysis

- • Traffic monitoring: Congestion assessment

- • Green spaces: Urban forestry management

- • Energy efficiency: Building performance

Disaster Management

- • Emergency response: Rapid damage assessment

- • Recovery tracking: Reconstruction progress

- • Risk mapping: Vulnerability analysis

- • Preparedness: Infrastructure resilience

8.3 Advanced Inference Capabilities

AlphaEarth's inference system enables sophisticated geospatial analysis through similarity search, clustering, and pattern recognition. The 64-dimensional embeddings provide a rich semantic representation for diverse analytical tasks.

Core Inference Operations

Similarity Search

Nearest Neighbor Search

- • Find similar locations globally

- • Identify analog environments

- • Discover hidden patterns

- • Support transfer learning

Mathematical Framework

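Similarity is measured with cosine similarity. Because every embedding lies on the unit hypersphere (‖a‖ = ‖b‖ = 1), cosine similarity reduces to the dot product: cos(θ) = a · b.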
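The following is a minimal sketch of nearest-neighbor search over unit-length embeddings with NumPy. The random vectors stand in for real pixel embeddings, and the function names are illustrative. A brute-force dot product is shown for clarity; at global scale an approximate nearest-neighbor index would be the usual choice.

```python
import numpy as np


def normalize(v):
    """Project vectors onto the unit hypersphere (row-wise L2 normalization)."""
    return v / np.linalg.norm(v, axis=-1, keepdims=True)


def top_k_similar(query, embeddings, k=3):
    """Return indices and cosine similarities of the k rows most similar to query.

    For unit-length vectors, cosine similarity is simply the dot product.
    """
    scores = embeddings @ query       # shape (n,)
    idx = np.argsort(-scores)[:k]     # highest similarity first
    return idx, scores[idx]


rng = np.random.default_rng(0)
field = normalize(rng.normal(size=(1000, 64)))  # stand-in for 1,000 pixel embeddings
query = field[42]                               # use one pixel as the query location
idx, sims = top_k_similar(query, field, k=3)
print(idx[0], round(float(sims[0]), 6))         # the query itself ranks first: 42 1.0
```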

Clustering and Classification

Unsupervised Discovery

- • Automatic ecosystem mapping

- • Land use pattern detection

- • Climate zone identification

- • Anomaly detection
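Unsupervised discovery on the hypersphere can be sketched with spherical k-means, a k-means variant that assigns points by cosine similarity and re-normalizes each centroid. This is one reasonable choice for unit-length embeddings, not necessarily the method AlphaEarth uses. The synthetic data below stands in for real embeddings.

```python
import numpy as np


def spherical_kmeans(x, k, iters=20, seed=0):
    """Cluster unit-length rows of x by cosine similarity (spherical k-means)."""
    rng = np.random.default_rng(seed)
    # Farthest-point initialization: start from a random row, then repeatedly add
    # the row that is least similar to every centroid chosen so far.
    centroids = [x[rng.integers(len(x))]]
    for _ in range(k - 1):
        best_sim = np.max(x @ np.array(centroids).T, axis=1)
        centroids.append(x[np.argmin(best_sim)])
    centroids = np.array(centroids)
    for _ in range(iters):
        labels = np.argmax(x @ centroids.T, axis=1)  # assign each row to its most similar centroid
        for j in range(k):
            members = x[labels == j]
            if len(members):                          # keep the old centroid if a cluster empties
                c = members.sum(axis=0)
                centroids[j] = c / np.linalg.norm(c)  # mean direction, re-projected onto the sphere
    return labels, centroids


rng = np.random.default_rng(1)
e0, e1 = np.eye(64)[0], np.eye(64)[1]  # two synthetic "land-cover" directions
pts = np.vstack([e0 + 0.1 * rng.normal(size=(50, 64)),
                 e1 + 0.1 * rng.normal(size=(50, 64))])
pts /= np.linalg.norm(pts, axis=1, keepdims=True)
labels, _ = spherical_kmeans(pts, k=2)
print(labels[:5], labels[50:55])
```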

Supervised Learning

- • Few-shot classification

- • Domain adaptation

- • Active learning strategies

- • Uncertainty quantification
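Few-shot classification on fixed embeddings can be as simple as a nearest-centroid (prototype) classifier. Each class prototype is the normalized mean of a handful of labeled embeddings, and new pixels take the label of the most similar prototype. This is a common baseline swapped in for illustration, not a description of AlphaEarth's own supervised pipeline. The three synthetic classes are made up.

```python
import numpy as np


def fit_prototypes(x, y):
    """Average the labeled embeddings per class and re-normalize: one prototype per class."""
    classes = np.unique(y)
    protos = np.stack([x[y == c].mean(axis=0) for c in classes])
    protos /= np.linalg.norm(protos, axis=1, keepdims=True)
    return classes, protos


def predict(x, classes, protos):
    """Assign each embedding to the class whose prototype has the highest cosine similarity."""
    return classes[np.argmax(x @ protos.T, axis=1)]


rng = np.random.default_rng(0)
dirs = np.eye(64)[:3]  # three synthetic class directions


def sample(c, n):
    """Draw n noisy unit-length embeddings around class direction c."""
    v = dirs[c] + 0.1 * rng.normal(size=(n, 64))
    return v / np.linalg.norm(v, axis=1, keepdims=True)


# 5 labeled examples per class ("5-shot"), then 20 held-out points per class.
x_train = np.vstack([sample(c, 5) for c in range(3)])
y_train = np.repeat(np.arange(3), 5)
x_test = np.vstack([sample(c, 20) for c in range(3)])
y_test = np.repeat(np.arange(3), 20)

classes, protos = fit_prototypes(x_train, y_train)
acc = np.mean(predict(x_test, classes, protos) == y_test)
print(acc)
```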

Temporal Analysis

Change Detection

- • Multi-year trend analysis

- • Seasonal pattern recognition

- • Abrupt change detection

- • Recovery monitoring
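Abrupt change detection between two years can be sketched as a per-pixel cosine similarity between the two embedding fields, flagging pixels that fall below a threshold. The threshold of 0.8 is a hypothetical value chosen for illustration. In practice it would be calibrated against labeled change examples.

```python
import numpy as np


def change_map(emb_a, emb_b, threshold=0.8):
    """Flag pixels whose embeddings changed between two years.

    emb_a, emb_b: arrays of shape (rows, cols, 64) holding unit-length embeddings.
    Returns a boolean (rows, cols) mask where cosine similarity is below threshold.
    """
    sim = np.sum(emb_a * emb_b, axis=-1)  # per-pixel dot product = cosine similarity
    return sim < threshold


rng = np.random.default_rng(0)
year1 = rng.normal(size=(4, 4, 64))
year1 /= np.linalg.norm(year1, axis=-1, keepdims=True)
year2 = year1.copy()
year2[1, 2] = -year1[1, 2]                    # simulate a maximal change at one pixel
print(np.argwhere(change_map(year1, year2)))  # [[1 2]]
```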

Forecasting

- • Trajectory prediction

- • Scenario modeling

- • Risk assessment

- • Impact evaluation

API and Integration Framework

RESTful API

- • GET /embeddings - Retrieve embeddings

- • POST /similarity - Find similar locations

- • POST /classify - Run classification

- • GET /temporal - Time series analysis
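The sketch below shows how requests to two of the endpoints above might be assembled. The base URL, query parameters, and JSON field names are all hypothetical placeholders, since this section lists only the paths. The functions build requests without sending them, so the example runs offline.

```python
import json
from urllib.parse import urlencode

BASE_URL = "https://api.example.com/v1"  # hypothetical placeholder, not a real AlphaEarth endpoint


def embeddings_request(lat, lon, year):
    """Build a GET /embeddings request; the parameter names are assumptions."""
    query = urlencode({"lat": lat, "lon": lon, "year": year})
    return "GET", f"{BASE_URL}/embeddings?{query}"


def similarity_request(lat, lon, year, k=10):
    """Build a POST /similarity request with a JSON body; the field names are assumptions."""
    body = {"location": {"lat": lat, "lon": lon}, "year": year, "top_k": k}
    return "POST", f"{BASE_URL}/similarity", json.dumps(body)


print(embeddings_request(37.42, -122.08, 2023))
# ('GET', 'https://api.example.com/v1/embeddings?lat=37.42&lon=-122.08&year=2023')
```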

Client Libraries

- • Python: alphaearth-py package

- • R: alphaearth-r package

- • JavaScript: Web integration

- • Julia: Scientific computing

8.4 Deployment and Scaling Architecture

AlphaEarth's deployment architecture is designed for global scale and accessibility. The system leverages cloud infrastructure and content delivery networks to provide low-latency access to embeddings and inference capabilities worldwide.

Performance and Reliability

Reliability Features

Reliability Features

- • 99.99% uptime SLA

- • Multi-region redundancy

- • Automated failover

- • Real-time monitoring
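The following arithmetic expresses the 99.99% uptime target as a downtime budget. It assumes a 365-day year and a 30-day month.

$$
\text{downtime}_{\text{year}} = (1 - 0.9999) \times 365 \times 24 \times 60\ \text{min} = 0.0001 \times 525{,}600\ \text{min} \approx 52.6\ \text{min}
$$

$$
\text{downtime}_{\text{month}} = 0.0001 \times 30 \times 24 \times 60\ \text{min} = 0.0001 \times 43{,}200\ \text{min} \approx 4.3\ \text{min}
$$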

8.5 Future Directions and Global Impact

AlphaEarth represents the beginning of a new era in Earth observation. The foundation model approach opens unprecedented opportunities for scientific discovery, environmental protection, and sustainable development at planetary scale.

Emerging Research Directions

Scientific Discovery

- • Climate dynamics: Pattern discovery

- • Ecosystem interactions: Food web analysis

- • Biogeochemical cycles: Carbon/nitrogen

- • Planetary boundaries: Earth system limits

Technological Innovation

- • Multi-modal fusion: SAR + optical + LiDAR

- • Real-time processing: Edge computing

- • Quantum computing: Advanced algorithms

- • Space-based AI: On-satellite inference

Transformative Impact Potential

Vision for the Future:

The vision behind AlphaEarth is a world where Earth observation intelligence is accessible to everyone, from researchers and policymakers to farmers and conservationists. By democratizing access to advanced geospatial analysis, we enable evidence-based decision-making at every scale, from local communities to global institutions. This foundation model represents a crucial step toward a sustainable and well-informed planetary civilization.